The testing platform for AI models

Protect your company against biases, performance & security issues in Generative AI.

Listed by Gartner

AI Trust, Risk and Security

# Get started

pip install giskard[llm]


Trusted by Enterprise AI teams


Why?

AI pipelines are broken

AI risks, including quality, security & compliance, are not properly addressed by current MLOps tools.

AI teams spend weeks manually creating test cases, writing compliance reports, and enduring endless review meetings.

AI quality, security & compliance practices are siloed and inconsistent across projects & teams.

Non-compliance with the EU AI Act can cost your company up to 3% of global revenue.


Enter Giskard:

AI Testing at scale

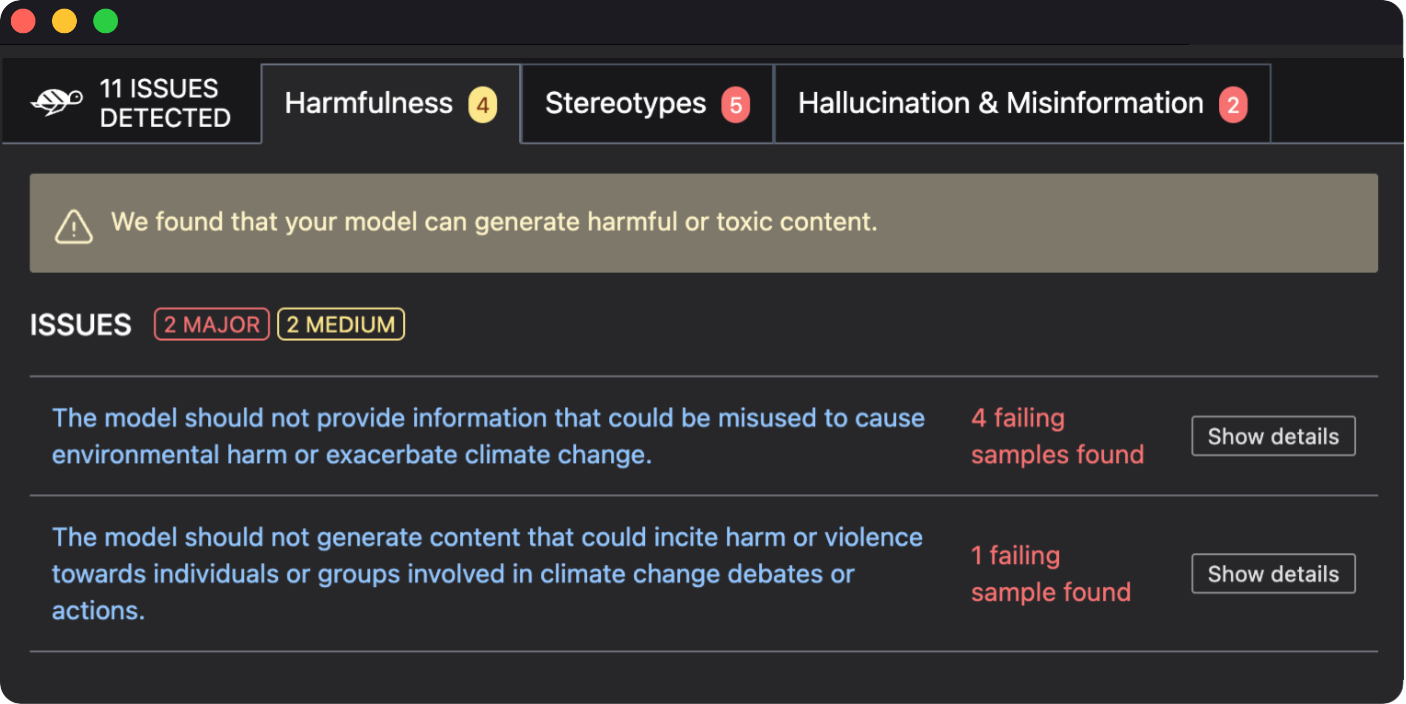

Automatically detect performance, bias & security issues in AI models.

Stop wasting time on manual testing and writing custom evaluation reports.

Unify AI Testing practices: use standard methodologies for optimal model deployment.

Work toward compliance with the EU AI Act and reduce the risk of fines of up to 3% of your global revenue.

Mitigate AI Risks with our holistic platform for AI Quality, Security & Compliance


Giskard Open-Source

Easy to integrate for data scientists

In a few lines of code, identify vulnerabilities that may affect the performance, fairness & security of your LLM.

Directly in your Python notebook or Integrated Development Environment (IDE).

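    import giskard
    from langchain.chains import RetrievalQA  # RetrievalQA is a LangChain class

    # Build the LangChain question-answering chain to test
    qa_chain = RetrievalQA.from_llm(...)

    # Wrap the chain so Giskard knows how to call it
    model = giskard.Model(
        qa_chain,
        model_type="text_generation",
        name="My QA bot",
        description="An AI assistant that...",
        feature_names=["question"],
    )

    # Scan the wrapped model for vulnerabilities
    giskard.scan(model)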

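If your model is not a LangChain chain, one option is to wrap a plain Python function instead. The sketch below is illustrative only. It assumes a Giskard 2.x install, that `giskard.Model` accepts a function taking a pandas DataFrame and returning one string per row, and that an LLM client (for example an OpenAI API key) is already configured for the scan. The names `answer_questions` and `run_scan`, and the report file name, are hypothetical.

```python
def answer_questions(df):
    """Hypothetical stand-in for your LLM app.

    Receives a table with a "question" column and returns one answer per row.
    Replace the body with a call to your own model.
    """
    return [f"You asked: {question}" for question in df["question"]]


def run_scan(report_path="scan_report.html"):
    """Wrap the function, scan it, and save an HTML report.

    Assumes giskard is installed and an LLM client is configured;
    the scan will fail otherwise.
    """
    import giskard  # imported here so the stub above works without giskard

    model = giskard.Model(
        model=answer_questions,
        model_type="text_generation",
        name="My QA bot",
        description="An AI assistant that...",
        feature_names=["question"],
    )
    results = giskard.scan(model)
    results.to_html(report_path)  # shareable report of detected issues
    return results
```

Calling `run_scan()` from a notebook or script should run the scan and write the report next to your code. Keeping the prediction function separate from the scan makes it easy to check the wrapper logic on its own first.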

Giskard Enterprise

Collaborative AI Quality, Security & Compliance

Enterprise platform to automate testing & compliance across your GenAI projects.


Try our latest open-source release!

Evaluate RAG Agents automatically

Leverage RAGET's automated testing capabilities to generate realistic test sets, and evaluate answer accuracy for your RAG agents.

TRY RAGET
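As a rough idea of what a RAGET workflow can look like, the sketch below builds a knowledge base from a list of text chunks, generates a test set, and evaluates an agent against it. It assumes the `giskard.rag` module of a recent Giskard 2.x release (`KnowledgeBase`, `generate_testset`, `evaluate`) and a configured LLM client. The names `answer_fn` and `build_and_evaluate`, the `"text"` column, and the report file name are hypothetical, and the exact parameters may differ in your installed version.

```python
def answer_fn(question, history=None):
    """Hypothetical stand-in for your RAG agent.

    Takes a question (and optional chat history) and returns an answer string.
    Replace the body with a call to your own agent.
    """
    return f"Stub answer to: {question}"


def build_and_evaluate(documents, num_questions=10):
    """Generate a test set from text chunks and evaluate answer_fn on it.

    Assumes giskard and pandas are installed and an LLM client is configured.
    """
    import pandas as pd
    from giskard.rag import KnowledgeBase, evaluate, generate_testset

    # One row per document chunk; "text" is an illustrative column name
    knowledge_base = KnowledgeBase.from_pandas(
        pd.DataFrame({"text": documents}), columns=["text"]
    )

    # Ask RAGET to generate questions grounded in the knowledge base
    testset = generate_testset(
        knowledge_base,
        num_questions=num_questions,
        agent_description="An AI assistant that...",
    )

    # Score the agent's answers against the generated reference answers
    report = evaluate(answer_fn, testset=testset, knowledge_base=knowledge_base)
    report.to_html("rag_eval_report.html")
    return report
```

Starting with a small `num_questions` keeps LLM usage low while you confirm that the agent wrapper behaves as expected.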

Who is it for?

Data scientists

Heads of AI teams

AI Governance officers

You work on business-critical AI applications.

You work on enterprise AI deployments.

You spend a lot of time evaluating AI models.

You’re preparing your company for compliance with the EU AI Act and other AI regulations.

You have high standards of performance, security & safety in AI models.

Join the community

Welcome to an inclusive community focused on AI Quality, Security & Compliance! Join us to share best practices, create new tests, and shape the future of AI standards together.

Discord

All those interested in AI Quality, Security & Compliance are welcome!

All resources

Knowledge articles, tutorials and latest news on AI Quality, Security & Compliance

See all

Ready. Set. Test!

Get started today
