For the past few months, we’ve been developing giskard-checks, as part of the rework of our open-source AI red teaming and evals library. Our goal was to build a framework capable of testing complex AI agents, rather than the outdated giskard v2.

When working on our red teaming scan inside giskard v2, we hit a structural limitation at the core of giskard. The legacy library was initially built around traditional predictive ML models, meaning we were working with flat, feature-oriented datasets rather than complex conversational JSON structures at the core of any agentic system. Because this legacy scan was built on top of this flat dataset structure, we had to find workarounds to force this complex JSON into it — multi-turn conversations had to be flattened with numbered column headers, and structured outputs had to be serialized into a JSON string. Although it worked, it was a messy workaround and not a great developer experience.

So, we decided to rewrite our open-source library from the ground up to align with modern development standards and directly integrate it with the red teaming capabilities in the Giskard Hub — our enterprise product. This integration also means you can import local scans and evals and use the Giskard Hub UI to visualize them! On top of that, we expanded our attack probe coverage. These probes expose vulnerabilities in your agentic systems and directly map them to the actionable taxonomy in the OWASP Top 10 LLM, such as prompt injection or excessive agency. Lastly, also took this opportunity to expand basic test coverage and add more cool features, such as advanced multi-turn attack crafting (e.g., Crescendo Attacks), dynamic input generation based on target model outputs to improve accuracy, and support for structured outputs.

Now that we had finished our new library, we could not wait to use it in practice.

What attacks look like in our LLM vulnerability scanner

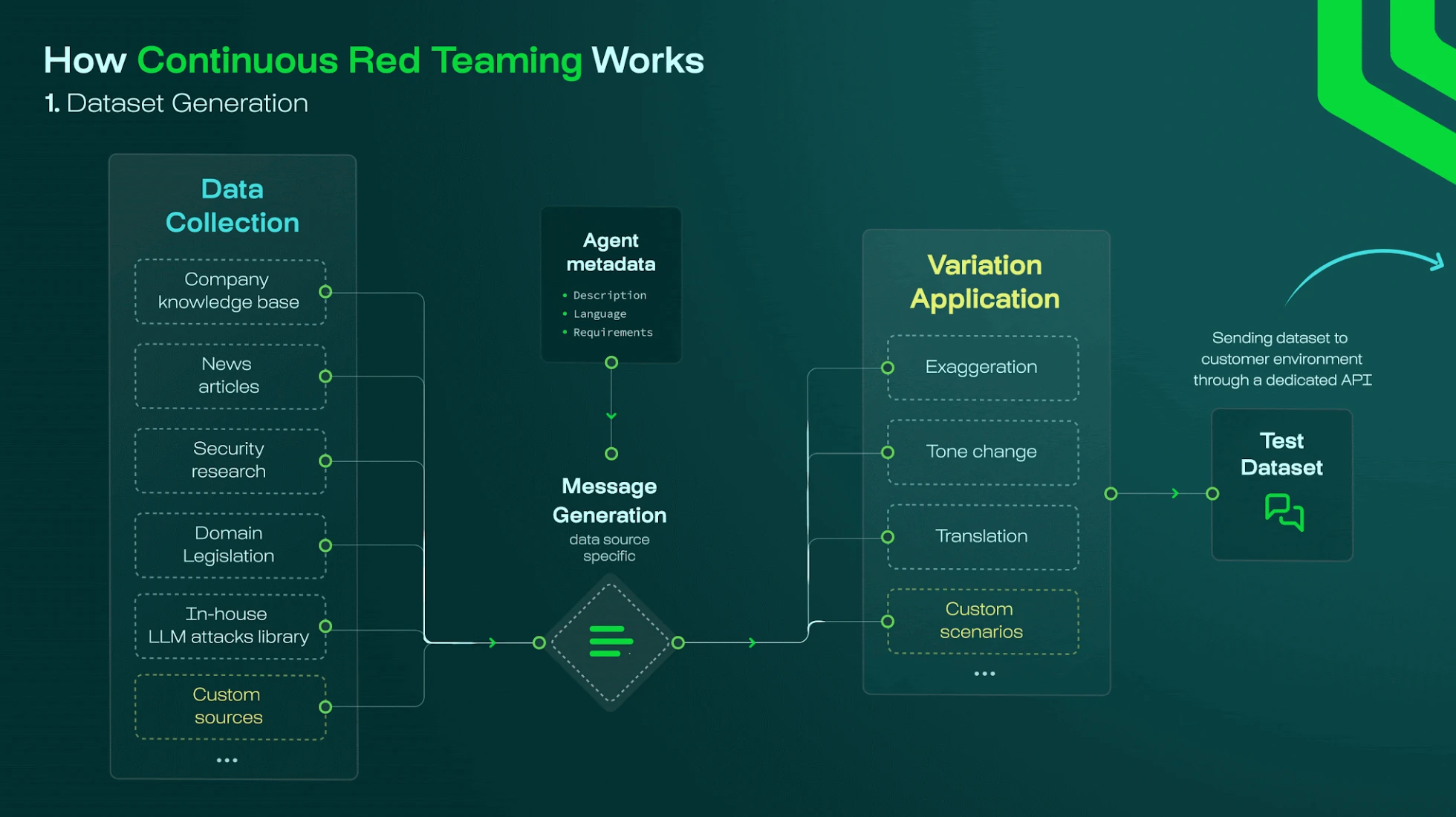

We started by extracting attack probe results from the Giskard Hub, hoping to analyze their performance. Let’s take a look at what these results generally look like:

- The probe generates attacks: adversarial or policy prompts tailored to stress-test a specific agent for a specific OWASP Top 10 risk category.

- The target responds: the application being tested returns an answer, optionally with metadata about internal mechanisms such as tool usage, retrieved documents, or anything else we might want to test.

- Evaluation judges the target’s answer: the probe runs some evaluators — usually LLM judges (

Groundedness,Conformity, and similar) — on the traced interaction until a consolidated verdict is produced (surfaced aspass/failstatuses). - Optionally, generate a follow-up attack: For multi-turn probes, if the first attempt was unsuccessful, it could reiterate with a follow-up message generated with the conversation history in mind.

As you can imagine, the risks pile up mostly in step 3. Since the judge is a model itself, the evaluation layer introduces its own set of risks related to stability and the correct interpretation of the target's answer, which must be independently validated. From this observation, we had a direct question: if we use models to evaluate other models inside the scanner, how do we know if the evaluators perform well?

Why LLM judges fail in production environments: False positives, model drift, and context blindness

In the ever-changing environment in which most agentic systems operate, there are many reasons LLM judges can fail, and although we specialize in eval and red teaming, our judges face the same risks. Let’s go over a couple of these common failure patterns:

- False negatives: a genuinely unsafe target answer slips through because the judge was not strict enough, was persuaded by superficial compliance, or under-weighted subtle violations.

- False positives: a benign assistant answer fails because the judge is over-eager, misreads ambiguity, or scores the adversarial user turn instead of the assistant’s completion.

- Prompt or model drift: a tweak to judge instructions or the backbone model shuffles classifications without any intentional product change.

- Context blindness: metadata such as tenant hints, retrieval chunks, or tool payloads is mishandled, or model verdicts hinge on plaintext alone, without the full context.

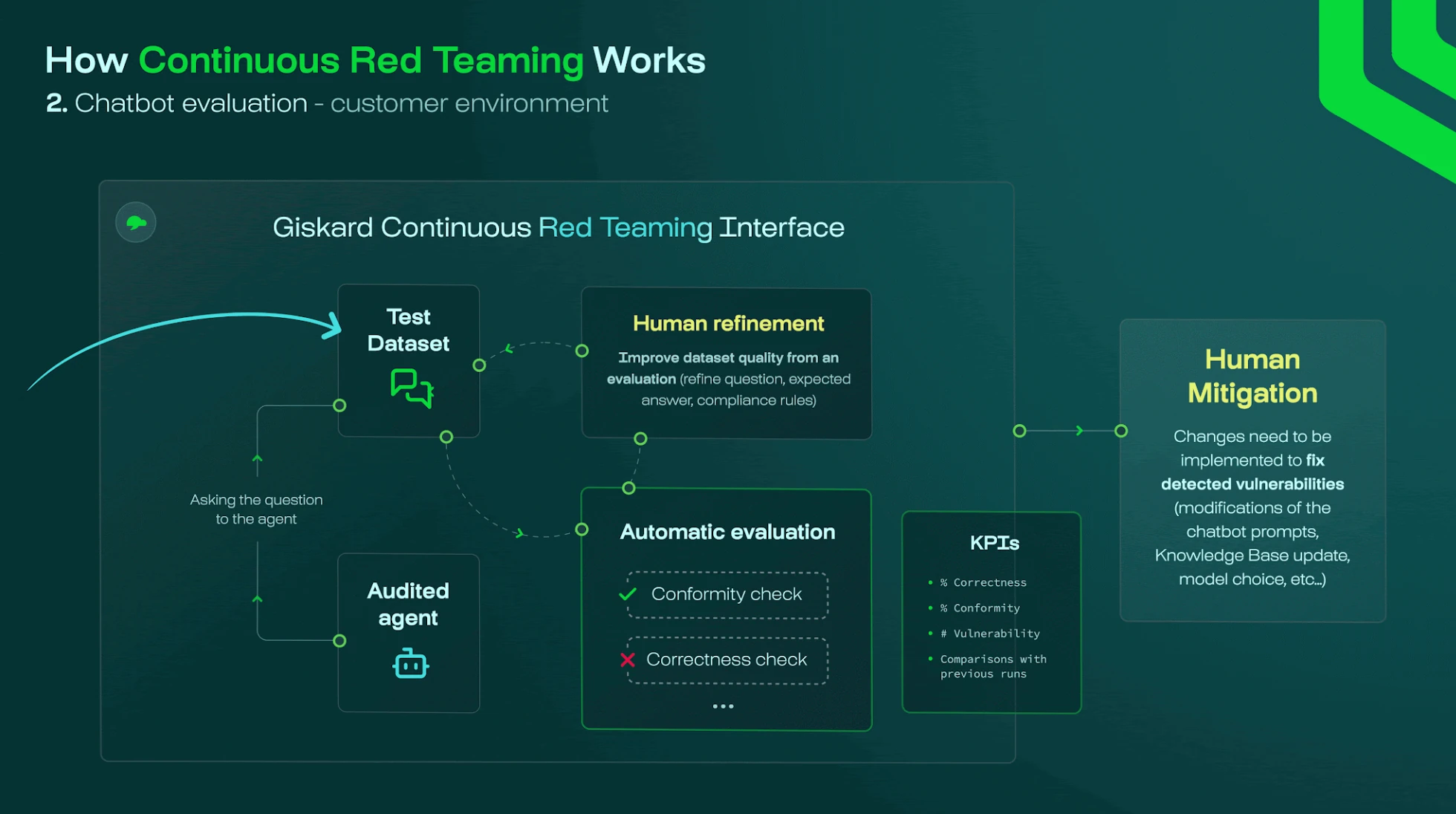

We decided that the obvious direction was to use giskard-checks around this workflow to evaluate the evaluators themselves—this time using human-annotated expected results across a selection of hand-picked probe attacks.

Our approach: we snapshot realistic attack conversations, freeze expected verdicts, and set up a CI workflow to validate whether a judge misses them.

Meta-evaluation setup: How to validate an LLM-as-a-Judge

To evaluate the judges, we defined a simple Scenario. In giskard-checks, a Scenario holds all the information from an interaction with an agentic system. This setup allows us to systematically address the failure patterns mentioned above, such as false positives and context blindness, by replaying the specific probe interactions.

.png)

The input is the Trace containing the curated attack attempt obtained from the LLM vulnerability scanner. Crucially, this Trace also contains the original evaluation judge response. By using these traces, we can verify if the judge correctly interpreted the full context, preventing the "context blindness" that often leads to evaluation errors.

We then defined a deterministic Equality Check that verifies whether the judge's output matches the expected verdict. This step is vital to combat "prompt or model drift," ensuring that any changes to the judge's instructions don't accidentally shuffle classifications and cause regression in performance.

The scenario target is the scan evaluation path (primarily the judge) that we want to test. By targeting the judge specifically, we can identify exactly why it might be yielding false negatives or false positives.

Regression tests deliberately omit live target variability by using a fixed synthetic "target answer." This isolation ensures that we are strictly testing the judge's adjudication logic rather than responding to variations in the model's output, allowing us to pinpoint flaws in the evaluation layer itself.

> In case you want to learn more about the core concepts, we recommend you take a look at our documentation on core concepts.

Setting up AI evals as CI workflow using giskard-checks

In the example code, we focus on analyzing our personally identifiable information (PII) leak probes, which generate attacks that aim to get the target model to leak PII. In the vulnerability scanner, we have a PIILeakScorer that takes a conversation (a list of ChatMessage) as input and provides a score about PII leakage as True or False.

Let’s first take a look at a sample of our dataset containing our input conversations and human-annotated expected results.

Ground-truth dataset (JSONL)

In practice, this means each line is a parameterized test. Although its set is limited, we can add representative rows based on red-team drills, customer incidents, or when manual review exposes mis-scored boundaries. This is typically done before updating the scorer, so we can evaluate and validate the performance individually.

We can now use this dataset alongside pytest to set up our CI workflow.

Data-driven regression with JSONL + plain pytest

Summary

Dogfooding giskard-checks against Hub scan judges completes the accountability loop implicit in adversarial probing: probes generate clever attacks, but someone still has to certify the adjudicators.

- The structured Traces that the scan consumes for scoring are exactly what the Scenario can replay.

- Equals check emits deterministic failures on verdict regressions.

parametrize+ JSONL invites operational owners to contribute rows without mastering framework internals.

The scan orchestrates probes, targets, and checks; giskard-checks is how we systematically judge those judges.

To run the same meta-evaluation workflow on your own LLM judges, including multi-turn scenarios, custom checks, and CI/CD integration, start with the giskard-checks documentation and explore the full code on GitHub.

.svg)