⚠️ Note: Giskard v3 is currently in Pre-release (Beta). We are actively refining the APIs and welcome early adopters to provide feedback and report issues as we move toward a stable 3.0.0 release.

Why the Change?

While Giskard v2 served thousands of developers (directly, or indirectly through the Databricks AI Red-teaming toolkit), it was built during a different era of machine learning. We undertook a major refactoring for two primary reasons:

- Dependency Management: v2 was heavily dependent on a vast array of ML libraries (like scikit-learn, PyTorch, and pandas), which often led to "dependency hell". This made the library hard to maintain and caused frequent versioning conflicts for users.

- Structural Limitations: The previous architecture was centered around a tabular dataset structure. This format was poorly suited to LLM-based applications, particularly modern chatbots that require multi-turn conversation and complex context handling.

What's New in v3?

Giskard v3 is a fresh rewrite that moves away from the monolithic design of Giskard v2 in favor of a modular, package-based ecosystem. Because the functionality is split across separate packages, you install only what you need, keeping your environments clean and lightweight.

- Modular Packages: We've split the core functionality into focused libraries:

  - giskard-checks: A composable library for testing AI agents, from simple assertions to dynamic multi-turn scenarios.

  - giskard-agents: A framework for building agent workflows that support tool calls.

- Data Structure Agnostic Design: Instead of forcing agents into tabular structures, v3 treats the AI System as a core abstraction with an API that can wrap any data structure, from a simple classifier to a complex, multi-step agentic pipeline.
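
To make the data-structure-agnostic idea concrete, here is a minimal plain-Python sketch. It does not use Giskard's API: `WrappedSystem`, `toy_classifier`, and `toy_chatbot` are hypothetical names invented for illustration. It only shows how a single wrapper type can hold systems whose inputs have very different shapes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Generic, List, TypeVar

In = TypeVar("In")
Out = TypeVar("Out")


@dataclass
class WrappedSystem(Generic[In, Out]):
    """Hypothetical wrapper (not a Giskard class): any callable is a 'system'."""

    name: str
    call: Callable[[In], Out]

    def __call__(self, inputs: In) -> Out:
        # Delegate to the wrapped callable without assuming a tabular input.
        return self.call(inputs)


def toy_classifier(features: Dict[str, float]) -> str:
    """Stand-in classifier: takes a feature dict, returns a label."""
    return "positive" if features["score"] >= 0.5 else "negative"


def toy_chatbot(history: List[Dict[str, str]]) -> str:
    """Stand-in chatbot: takes the message history, echoes the last message."""
    return f"You said: {history[-1]['content']}"


# The same wrapper type holds both systems despite their different inputs.
classifier = WrappedSystem("classifier", toy_classifier)
chatbot = WrappedSystem("chatbot", toy_chatbot)

label = classifier({"score": 0.8})
reply = chatbot([{"role": "user", "content": "Hello"}])
```

The wrapper makes no assumption about rows or columns, so a multi-turn message history is as valid an input as a flat feature dictionary.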

Introducing Scenarios

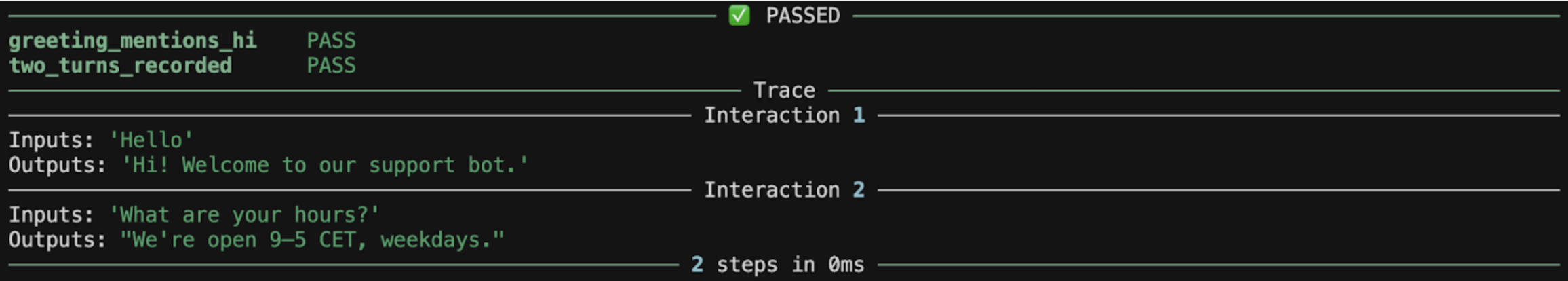

The main feature introduced by giskard-checks is the scenario: an ordered sequence of interactions and checks. Each step can build on the conversation so far, so you exercise multi-turn flows the way users actually experience them. Execution stops at the first failing check, which makes regressions easy to spot.

The example below chains two turns; after each turn, a small predicate check runs on the accumulated trace. In practice you would plug in real model outputs (and richer checks such as groundedness or LLM-as-judge) instead of fixed strings.

```python
import asyncio

from giskard.checks import Scenario, from_fn

# Interactions and checks run in order; execution stops at the first failing check.
test_scenario = (
    Scenario("conversation_flow")
    # Turn 1: fixed strings stand in for real model outputs.
    .interact(inputs="Hello", outputs="Hi! Welcome to our support bot.")
    .check(
        from_fn(
            lambda trace: "Hi" in trace.last.outputs,
            name="greeting_mentions_hi",
        )
    )
    # Turn 2: the check runs on the accumulated trace, so both turns are visible.
    .interact(inputs="What are your hours?", outputs="We're open 9–5 CET, weekdays.")
    .check(
        from_fn(
            lambda trace: len(trace.interactions) == 2,
            name="two_turns_recorded",
        )
    )
)

result = asyncio.run(test_scenario.run())
result.print_report()
```
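
To illustrate the stop-at-first-failure behavior described above, here is a toy model in plain Python. It is not how giskard-checks is implemented: `ToyInteraction`, `ToyTrace`, `ToyCheck`, and `run_steps` are hypothetical names used only for this sketch. A deliberately failing check is placed in the middle to show that later checks never run.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple, Union


@dataclass
class ToyInteraction:
    inputs: str
    outputs: str


@dataclass
class ToyTrace:
    interactions: List[ToyInteraction] = field(default_factory=list)

    @property
    def last(self) -> ToyInteraction:
        return self.interactions[-1]


@dataclass
class ToyCheck:
    name: str
    predicate: Callable[[ToyTrace], bool]


def run_steps(steps: List[Union[ToyInteraction, ToyCheck]]) -> Tuple[bool, List[str]]:
    """Run steps in order; return (all_passed, names of checks that passed)."""
    trace = ToyTrace()
    passed: List[str] = []
    for step in steps:
        if isinstance(step, ToyInteraction):
            # Interactions extend the trace that later checks will see.
            trace.interactions.append(step)
        elif step.predicate(trace):
            passed.append(step.name)
        else:
            # First failing check: stop here and skip all remaining steps.
            return False, passed
    return True, passed


steps = [
    ToyInteraction("Hello", "Hi! Welcome to our support bot."),
    ToyCheck("greeting_mentions_hi", lambda t: "Hi" in t.last.outputs),
    ToyInteraction("What are your hours?", "We're open 9 to 5 CET, weekdays."),
    # This check fails on purpose: the reply never mentions weekends.
    ToyCheck("mentions_weekend", lambda t: "weekend" in t.last.outputs),
    # Never evaluated, because the run stops at the failure above.
    ToyCheck("two_turns_recorded", lambda t: len(t.interactions) == 2),
]

ok, passed_checks = run_steps(steps)
```

Stopping early keeps the failure tied to the exact turn where the conversation went wrong, rather than burying it under failures that merely follow from it.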

Our documentation has more complex examples based on real-life use cases.

The Roadmap: What's Next?

The launch of v3 is just the beginning. Our team is working hard on several key updates to complete the ecosystem:

- RAG evaluation: We are migrating our popular RAG Evaluation Toolkit (RAGET) to the v3 architecture. This will enhance synthetic data generation and information retrieval benchmarking in the new modular framework.

- Improved LLM Vulnerability Scan: We've been working on a new LLM-focused vulnerability scan that we can't wait to share! This scanner will feature OWASP-categorized security checks and support for both single-turn and multi-turn red-teaming scenarios.

- Integration with Giskard Hub: We are tightening the link between our open-source tools and Giskard Hub, our enterprise platform. This will allow teams to seamlessly move from local testing to collaborative red-teaming, version control, and continuous monitoring in a unified UI.

Get Involved

Giskard v3 is open-source and built for the community. You can explore the new code, check out the detailed roadmap, and start testing your agents today:

- Explore the Documentation: docs.giskard.ai/oss/

- Star the Repository: github.com/giskard-AI/giskard-oss

- Join the Conversation: Reach out via GitHub or join our Discord channel to share your feedback as we refine the Giskard ecosystem!

We can't wait to see what you build and test with v3!
