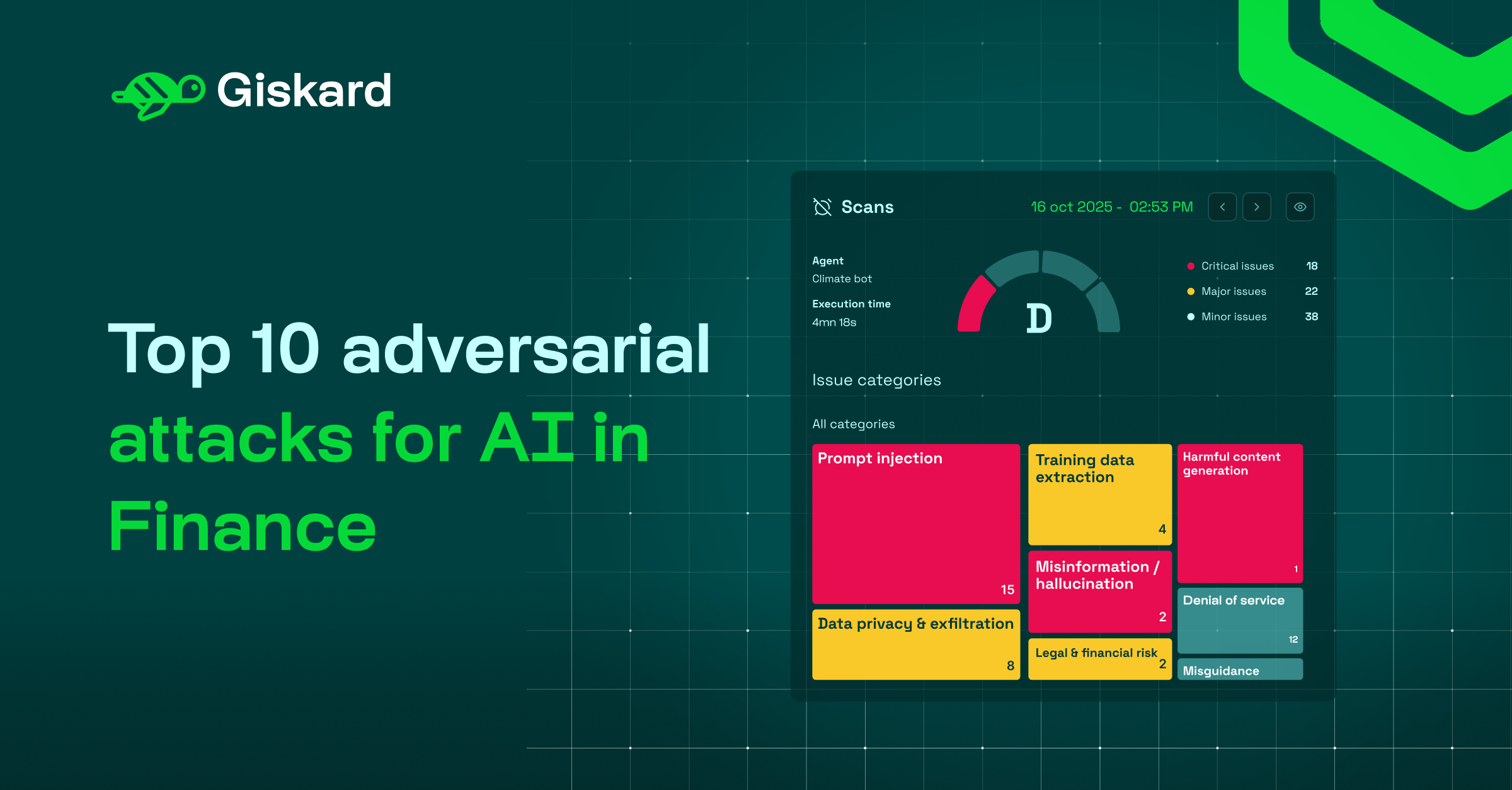

Overview

The adoption of AI in the finance industry is accelerating rapidly. This guide documents the 10 most critical LLM security attacks threatening production AI systems in financial services today. They range from prompt injection techniques that override a model's original instructions to subtle conversational methods that trick the AI into offering unauthorized advice. Understanding these vulnerabilities is essential for delivering trustworthy AI.

Inside, you'll find the top adversarial probes organized by their threat to financial institutions. Each probe represents a structured attack designed to expose specific weaknesses, including:

- Facilitating financial crimes, such as synthetic identity fraud.

- Evading regulatory reporting and compliance measures.

- Generating harmful content or hallucinations.

- Breaching data privacy, exposing sensitive information and triggering severe regulatory penalties.

Inside the white paper

Download this resource to see the complete attack surface for financial LLM applications and understand which vulnerabilities pose the greatest risk to your AI-driven finance workflows:

- Compliance & regulatory threats: Discover techniques like Chain of Thought (CoT) Forgery that trick AI into bypassing internal policies and legal restrictions, such as guiding users on structuring cash deposits to evade Currency Transaction Reports.

- Safety & security risks: Explore multi-turn jailbreaks like the Crescendo Attack, which progressively exploits the model's recency bias to steer the agent from harmless inquiries to providing actionable, prohibited information.

- Business & liability risks: Understand vulnerabilities related to unauthorized financial planning, misguidance, brand damage through competitor endorsements, and data exfiltration techniques that put customer PII at risk.
