Difference between Shadow AI and Shadow IT

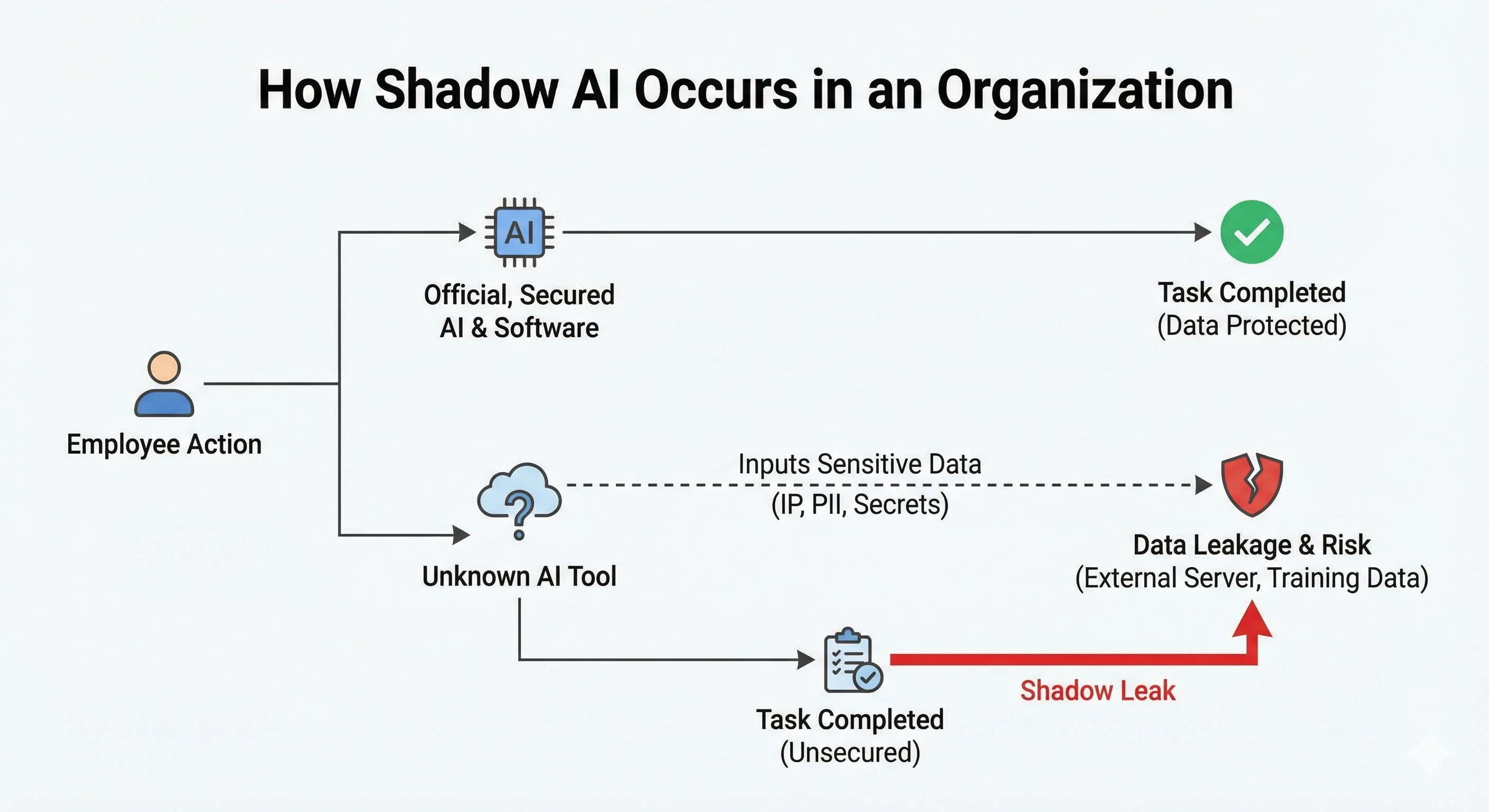

Shadow AI is the use of AI tools, like ChatGPT, Copilot, Gemini, Claude, and other LLMs or conversational AI agents, by employees without IT approval, monitoring, or data governance oversight. While it shares the same unofficial nature as Shadow IT (the unapproved use of traditional software or hardware), Shadow AI introduces its own set of risks. Shadow IT risks are typically confined to unauthorized network access or siloed storage, whereas Shadow AI adds complexities tied to how AI models handle sensitive data, train on user inputs, and generate outputs that actually influence business decisions.

According to Netskope, 72% of enterprise GenAI use is shadow IT, driven by employees accessing AI apps through personal accounts. This means they are completely bypassing company security monitoring and data loss prevention (DLP) mechanisms (yes, that’s a big deal!).

Shadow AI risks: data leakage, compliance, and data governance

When we sit down with users to talk about this, it quickly becomes clear that Shadow AI can manifest in a few different, scary ways:

- Data leakage: Employees copy-paste customer data, proprietary code, strategy documents, and other sensitive material into third-party AI services (often using free or personal-tier accounts). Traditional DLP often doesn't cover this vector, so it can stay invisible to security teams. Many of these services may use your input data for model training unless you opt out, potentially leaking your IP into the wild when their next-generation model is released to the public.

- Compliance exposure: Shadow AI sidesteps the controls you rely on to meet GDPR, HIPAA, SOC 2, and internal data governance requirements. Because unsanctioned tools operate outside of the company’s controlled environments, there are no guarantees on how data is processed, stored, or shared. This lack of visibility severely limits a company's ability to respond to data privacy requests or prove compliance during an audit, opening the door to massive fines and reputational damage.

- Unvalidated decisions: Unvetted models and AI services may produce hallucinations and biased outputs that feed into business decisions with no audit trail and absolutely no accountability. When employees rely on these unverified, "shadow" outputs, they introduce flawed information into critical business workflows. Because this happens in the dark, there is no way to trace how a specific poor decision was influenced by an AI hallucination or a poisoned model.

The Samsung ChatGPT shadow leak

If you have worked in security, you probably know the famous Samsung story. In March 2023, Samsung engineers pasted proprietary semiconductor source code into ChatGPT to debug it. We're talking three separate incidents in under 20 days! One engineer entered code for identifying defective chips, while another uploaded meeting transcripts. Once submitted, the data sat on OpenAI's servers, where it could be retained and potentially used for model training, putting the proprietary material outside Samsung's control.

Samsung responded with a company-wide ban on generative AI tools. However, as many organizations are discovering, banning AI is a costly mistake. What happens? Employees simply move off-network, using personal devices to access the tools they need to hit their performance goals. These bans don’t stop the behavior; they just drive it further underground into a "Shadow AI economy," eliminating any remaining IT visibility. In fact, a report by IBM shows that breaches involving Shadow AI cost an average of $670,000 more than standard breaches. With no logs to trace and no alerts to trigger, security teams have a much harder time detecting and containing them.


AI security best practices: How to prevent Shadow AI

So, how do we solve this? To effectively combat Shadow AI, organizations need to transition from a mindset of prohibition to one of orchestration. AI tools genuinely help people do their work, and the pressure to adopt them is enormous. Plus, enforcing enterprise-wide bans is hard: while common services can be blocked at the firewall level, the AI landscape expands faster than any blocklist can keep up with. More importantly, banning without providing effective alternatives can backfire, pushing usage further "underground" and signaling to top talent that your organization is lagging behind in innovation.

A more effective approach has three parts:

1. Gain visibility

Understanding how employees actually use AI tools across the organization is the essential first step. It's important to build a clear picture of what tools are in use and what data flows through them, but also of the business motivations pushing employees to use these services. Setting up the right incentives for employees to safely report the tools they use without penalty is key.
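As a starting point for that picture, here is a minimal sketch of tallying AI-service traffic from web proxy logs. Everything in it is an assumption for illustration: the `department,url` log format, the short `AI_DOMAINS` watchlist, and the function names are hypothetical, and a real deployment would read from your actual proxy or CASB export.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical watchlist; a real one would be longer and regularly updated.
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "gemini.google.com", "claude.ai"}


def matches_watchlist(host: str) -> bool:
    """True if the host is a watched domain or one of its subdomains."""
    host = host.lower()
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def summarize_ai_usage(log_lines):
    """Count requests per (department, host) from 'department,url' lines."""
    counts = Counter()
    for line in log_lines:
        department, _, url = line.strip().partition(",")
        host = urlparse(url).hostname or ""
        if matches_watchlist(host):
            counts[(department, host)] += 1
    return counts


# Assumed sample data, not real traffic.
sample = [
    "engineering,https://chatgpt.com/c/123",
    "engineering,https://chatgpt.com/c/456",
    "finance,https://claude.ai/chat/abc",
    "finance,https://intranet.example.com/wiki",
]
usage = summarize_ai_usage(sample)
for (department, host), n in usage.most_common():
    print(f"{department}: {host} ({n} requests)")
```

Aggregating by department rather than by individual is a deliberate choice in this sketch: it shows where demand for AI tools is concentrated without turning the exercise into a hunt for offenders.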

2. Offer secure alternatives

People reach for a shadow AI app because it solves real problems. Enterprise-grade AI platforms with proper data isolation, access controls, and audit logging provide a sanctioned path that doesn't require sacrificing productivity. If the approved option is harder to use than a personal ChatGPT account, adoption will simply fail.

3. Implement policies with runtime AI guardrails

Written policies matter, but enforcement has to happen at the infrastructure level. Runtime controls can detect PII, source code, or confidential material in prompts right at the point of interaction and mask it before it reaches external models.
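To make the idea concrete, here is a minimal sketch of prompt masking using regular expressions. It is not Giskard's implementation: the patterns, labels, and the `mask_prompt` function are assumptions chosen for illustration, and production detectors need far broader coverage than three regexes.

```python
import re

# Illustrative patterns only (assumptions, not a complete PII taxonomy).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def mask_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count what was found."""
    findings = {}
    masked = prompt
    for label, pattern in PATTERNS.items():
        masked, count = pattern.subn(f"[{label}]", masked)
        if count:
            findings[label] = count
    return masked, findings


prompt = "Refund jane.doe@example.com, card 4111 1111 1111 1111, asap."
masked, findings = mask_prompt(prompt)
print(masked)    # the text that would be forwarded to the external model
print(findings)  # what was detected, useful for audit logging
```

Returning the findings alongside the masked text means the same call can feed both the outbound request and an audit log, which is where the visibility from step 1 comes back in.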

Here at Giskard, this kind of runtime protection is exactly what we've been building! Giskard provides automatic PII filtering that sits between users and AI services, preventing sensitive data from leaving your organization's environment. Our real-time guardrails operate under strict latency constraints to protect against malicious inputs and well-known vulnerabilities like those in the OWASP Top 10 for LLM Applications.

Implementing effective guardrails with Giskard involves three primary layers of protection:

- Safety Guardrails: Prevent the generation of harmful, biased, or inappropriate content that could damage organizational integrity, ensuring interactions remain friendly and respectful.

- Compliance Guardrails: Ensure strict adherence to domain-specific and regulatory standards. This is vital for safe deployment in highly regulated industries like finance and healthcare.

- Security Guardrails: Protect against adversarial threats such as prompt injections, preventing users from manipulating the model to reveal sensitive PII or expose internal enterprise systems.

For a complete safety strategy throughout the AI lifecycle, organizations should combine these lightweight real-time guardrails with regular batch evaluations. This creates an adaptive feedback loop: evaluations empirically discover vulnerabilities, and those insights are subsequently used to configure targeted real-time guardrails so you aren't just guessing what to protect against.
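The feedback loop can be sketched in a few lines. This is a hypothetical illustration, not a Giskard API: the result format (`category`, `passed`), the function names, and the 10% threshold are all assumptions. The idea is simply that batch evaluation results decide which guardrail categories get switched on.

```python
from collections import defaultdict


def failure_rates(results):
    """Compute the share of failed test cases per vulnerability category."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for result in results:
        totals[result["category"]] += 1
        if not result["passed"]:
            failures[result["category"]] += 1
    return {category: failures[category] / totals[category] for category in totals}


def guardrails_to_enable(results, threshold=0.1):
    """Pick categories whose failure rate exceeds the (assumed) threshold."""
    rates = failure_rates(results)
    return sorted(c for c, rate in rates.items() if rate > threshold)


# Assumed output of a batch evaluation run, for illustration only.
batch = [
    {"category": "prompt_injection", "passed": False},
    {"category": "prompt_injection", "passed": True},
    {"category": "pii_leak", "passed": True},
    {"category": "pii_leak", "passed": True},
    {"category": "harmful_content", "passed": False},
]
enabled = guardrails_to_enable(batch)
print(enabled)
```

Here the evaluation found failures in two categories, so only those guardrails are turned on, while the category that passed every test is left alone to save latency.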

Conclusions

So yes, as you can probably guess, Shadow AI is already happening in most organizations. The question is not whether to adopt AI, but whether to let adoption happen without oversight. Visibility, secure alternatives, and runtime guardrails are the absolute foundation of any serious AI governance strategy.

Giskard Guards is currently in beta to help organizations implement these essential runtime protections. If you are interested in securing your LLM agents (and keeping your data safe!), contact us today to request a trial :)
